Full-text search engines got their start with WebCrawler, created by Brian Pinkerton in 1994. It began as a desktop application with a database of 4,000 hand-aggregated websites. That’s a far cry from the 3.5 billion webpages that World Wide Web reports today, amid fancy talk of organic search engine optimization and more apps than you can shake a stick at. Search engine optimization has evolved over the years alongside the search engines it relies on for traffic, and the history makes for a fascinating journey.

SEO in the Beginning

It took a few years after WebCrawler for Yahoo and Google to come onto the scene. Yahoo set up its portal website in 1995, providing links to web pages organized into many categories. Google didn’t show up until 1998, along with the DMOZ directory. Search engine optimization didn’t get into full swing until more search engines and directories used algorithm-produced results, as opposed to human-selected listings.

Marketers took advantage of Yahoo’s dependence on alphabetical sorting in 1995, and in 1996 began looking at how search engines sorted their machine-generated listings. Keyword density was a major factor then, along with website age. Marketers used a variety of techniques to put as many instances of their keywords on a page as possible: using white text on white backgrounds, stuffing metadata and taking other actions that are considered spam today. When Excite’s algorithm was cracked, it revealed the 35 different factors that went into ranking its pages.
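To make the idea of keyword density concrete, here is a minimal Python sketch. It assumes density means the share of a page's words that match a single-word keyword, with very simple tokenization. The function name and the sample text are hypothetical, and this is not any search engine's actual formula.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Return the fraction of words in `text` equal to `keyword` (case-insensitive).

    Assumption: a single-word keyword and a naive word tokenizer.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return words.count(keyword.lower()) / len(words)


# Hypothetical page copy: one keyword-stuffed, one written for readers.
stuffed = "cheap flights cheap flights book cheap flights today"
natural = "compare fares and book flights for your next trip"

print(f"{keyword_density(stuffed, 'cheap'):.1%}")
print(f"{keyword_density(natural, 'flights'):.1%}")
```

A stuffed page scores far higher on this measure than naturally written copy, which is why early marketers chased it and why it later became a spam signal.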

SEO Over the Past Decade

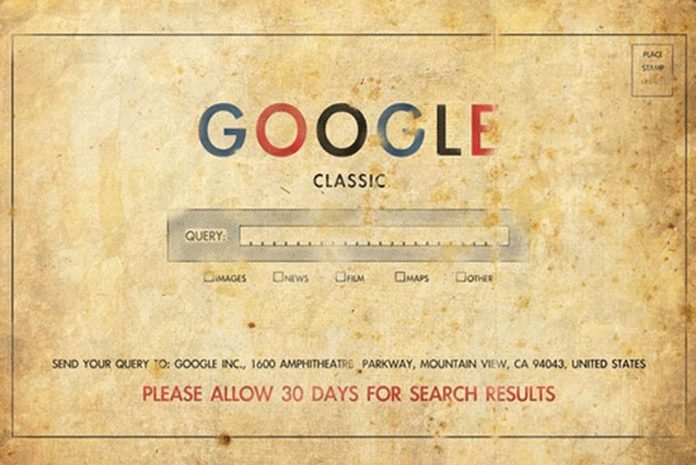

Google makes hundreds of algorithm changes these days, but it was a simpler time when Google came up with the PageRank algorithm. It was updated monthly, and rankings shifted with each update. Most search engine optimization was still centered on spam techniques, such as buying massive amounts of inbound links, filling up footers with links and any other technique that could be thought of to bring in more inbound links.

Google took a stand against spammy sites with November 2003’s Florida update. Many top sites vanished from the search results, and webmasters around the world panicked. Google specifically focused on websites that went too far with search engine optimization, and took them out of the index. As time went on, Google continued to adjust its search engine rankings to reward sites with good content. Content-based marketing became popular as a way to increase relevancy, although the content wasn’t always useful for readers. Instead, it stuck to strict keyword percentages to stay out of the spammy range.

The year 2005 was the first time personalized results were introduced to the search engine. The results shown in the rankings changed depending on whether or not the person was logged into their Google account. That made it harder for webmasters to figure out where their sites ranked for any given searcher.

These days, video and social media marketing work well, as Google’s universal search brings together results from all of its channels. Go forth and conquer!

Benjamin C is a technology reporter who covers Internet-related topics.